- Introduction to Java Threads

- Application without multithreading

- Application with multithreading

- Java Thread creation

- The Java thread’s life cycle

- Synchronization in Java

- Summary

Introduction to Java Threads

As Java programmers, most of us have had some contact with multithreading. One of the biggest challenges is to properly synchronize code between threads. Fortunately, the language designers have prepared a full set of tools that help us achieve this goal. If you are curious, or you are preparing for an interview, read this post.

Application without multithreading

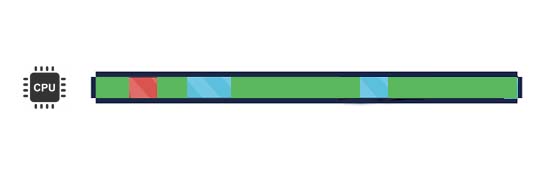

Single-threaded programs are simple applications that do not require concurrent execution of tasks. Each of the tasks is run after the previous one is finished. It looks like this:

These rectangles represent three tasks to be performed. The length of the rectangles represents the duration of each task. The tasks are started one by one - after the green task ends, the red task begins. You can say that the tasks are executed sequentially.

Application with multithreading

What is a Java Thread

Threads are a way for the processor to do many things at once. At a given point in time, the processor can execute only as many instruction streams as it has cores (the reality is more complicated, but this simplification makes the explanation easier). This would mean that you could only have as many programs running at once as you have processor cores. To work around this limitation, the operating system schedules threads on the cores - each program consists of one or more threads.

From the developer’s point of view, a thread is a sequence of instructions written in the application and executed independently of the application’s other threads. An application can be composed of several threads, and different threads can be executed at the same time.

What is Time Slicing

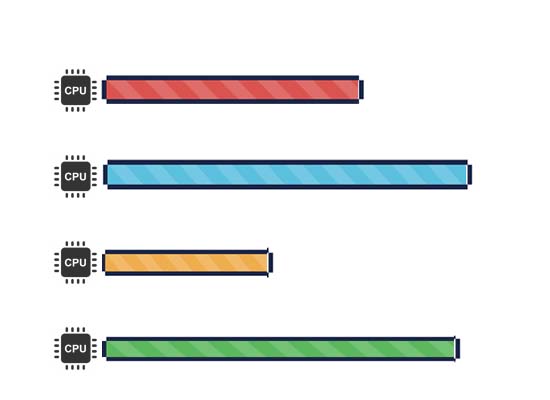

Going further, does that mean we can only run one thread on a single-core processor, and two threads on a dual-core processor? Not exactly. This problem was solved by time slicing, a mechanism that allows one processor core to run multiple threads. However, this does not happen in parallel. The image below shows the tasks from the previous point. This time each of them is run in a separate thread, so we have three threads. The mechanism that supervises their work (the thread scheduler) ensures that from time to time the current thread is stopped. Another thread is then woken up, gets processor time and is executed. This is known as a context switch. The sum of the lengths of the rectangles in a given color is the same as in the previous example.

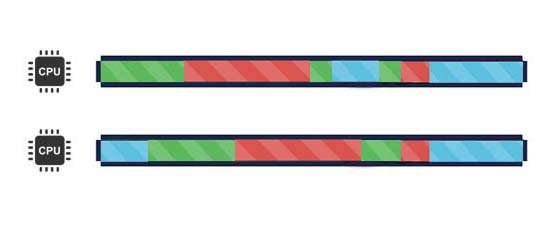

It should be remembered that such an approach does not speed up the execution of tasks (it takes time to stop and wake up the threads), but it does allow each “rectangle” to be processed a bit at a time. For what purpose? Imagine the following tasks:

The green rectangle is a task that opens the browser with the last saved tabs (one million tabs). In the meantime, the user presses the night-mode button, which is a very quick task (red rectangle). Without time slicing, it has to wait until the browser has finished opening the tabs. Only then will the next task be executed. With time slicing the work is split into slices, and even though each task takes the same amount of processor time, the night mode is turned on much sooner. The user does not have to wait for the browser to open:

This approach avoids starving the threads. In the above example, without time slicing, the thread with the green task would starve the threads with the blue and red tasks.

Multi-threading on multicore processors

Multi-core processors give you the real ability to run multiple tasks in parallel. In this case, if each of the tasks is run in a separate thread, the situation looks like in the picture below:

In your applications, you’ll meet a combination of both approaches. The image below shows an example of how the task is executed on two cores.

Concurrency vs Parallelism

As I mentioned earlier, parallel tasks are only possible on a multi-core CPU. It is important to remember that

When there is just one processor, the OS scheduler context switches between different threads to provide concurrent execution.

and

When there are multiple CPUs, each CPU essentially runs an instance of the OS scheduler, thereby executing threads that are waiting to be run. The result is parallel execution of the set of threads to be executed.
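As a small illustration, the JVM can report how many logical processors are available to it, which bounds how many threads can truly run in parallel. The class name ProcessorInfo is hypothetical and used only for this sketch.

```java
class ProcessorInfo {

    // Number of logical processors the JVM may use; always at least 1.
    static int availableCores() {
        return Runtime.getRuntime().availableProcessors();
    }

    public static void main(String[] args) {
        int cores = availableCores();
        System.out.println("Logical processors available: " + cores);
        System.out.println(cores > 1
                ? "Threads can run in parallel on different cores."
                : "Threads can only run concurrently, via time slicing.");
    }
}
```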

Concurrent data processing

The threads use the same data (they share the address space). This means that objects available for one thread are also visible in other threads.

Fields of shared objects and static fields are available to all threads (local variables are not: each thread has its own stack). Therefore, all threads can modify these shared variables. This has very serious consequences. I will describe them in more detail later in this post.
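A minimal sketch of what sharing the address space means in practice: a list created by one thread is modified by another, and the change is visible to the first thread afterwards. The class name SharedDataDemo is an assumption made for this illustration.

```java
import java.util.ArrayList;
import java.util.List;

class SharedDataDemo {

    // A worker thread adds an element to a list created by the calling thread.
    static List<String> shareList() {
        List<String> shared = new ArrayList<>();
        Thread worker = new Thread(() -> shared.add("added by worker"));
        worker.start();
        try {
            worker.join(); // wait for the worker, so that its write is visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return shared;
    }

    public static void main(String[] args) {
        System.out.println(shareList());
    }
}
```

Only one thread touches the list at a time here, which is why no further synchronization is needed in this sketch.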

Java Thread creation

Every thread in Java is related to the Thread class. There are several ways to create a thread.

Extending Thread class

The first way is to create your own class that extends the Thread class:

```java
public class FirstThread extends Thread {

    @Override
    public void run() {
        // This code is executed in the new thread
        System.out.println("Heellooo!");
    }
}

// Creating Thread
Thread thread = new FirstThread();
```

In this case, you have to override the run() method - its body will be executed in the thread. Extending the Thread class is not good practice. Note that in Java each class can extend only one class. Once you extend the Thread class, you can’t extend any other class you might need. Additionally, inheritance in Java is meant for adding or modifying the behavior of the parent class, and here we do not add or modify anything in the Thread class itself.
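Creating the object does not run anything yet. The sketch below (class name and thread names are hypothetical) shows the difference between calling run() directly, which executes the body in the calling thread like any other method, and calling start(), which executes it in the new thread.

```java
class StartVersusRun {

    // Returns the name of the thread that executed the body:
    // index 0 for a direct run() call, index 1 for start().
    static String[] executingThreadNames() {
        String[] names = new String[2];

        Thread direct = new Thread(() -> names[0] = Thread.currentThread().getName(), "worker-1");
        direct.run();    // plain method call: the body runs in the calling thread

        Thread started = new Thread(() -> names[1] = Thread.currentThread().getName(), "worker-2");
        started.start(); // the body runs in the new thread named "worker-2"
        try {
            started.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return names;
    }

    public static void main(String[] args) {
        String[] names = executingThreadNames();
        System.out.println("run()   executed in: " + names[0]);
        System.out.println("start() executed in: " + names[1]);
    }
}
```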

Implementation of the Runnable interface

The second way is to create a thread using the Thread constructor that accepts an object implementing the Runnable interface:

```java
public class FirstThread implements Runnable {

    @Override
    public void run() {
        System.out.println("Heellooo from Runnable :)");
    }
}

// Creating Thread
Thread thread = new Thread(new FirstThread());
```

This time the body of the thread is the implementation of the run() method of the interface (the thread will run this method and will work until it is done). Note that you can create a thread using an anonymous class:

```java
Thread newThread = new Thread(new Runnable() {

    @Override
    public void run() {
        System.out.println("Hello from the thread!");
    }
});
```

Additionally, Runnable is a functional interface. Therefore, this can be simplified by using a lambda expression:

```java
Thread newThread = new Thread(() -> System.out.println("Hello from the thread!"));
```

Creating threads in ExecutorService

ExecutorService is an interface that we do not have to implement on our own; Java provides us with ready-made implementations. Thanks to that we can easily create a thread or a pool of threads that will work according to our expectations. The most important methods that this interface provides are:

submit(Runnable task) - allows you to send a ‘task’ (an implementation of the Runnable interface) to be executed (note: we have no guarantee that it will be started immediately! It depends on the current state of the ExecutorService, the task queue, the available threads, etc.).

shutdown() - allows you to finish the threads in an orderly way: previously submitted tasks are completed, no new tasks are accepted, and the resources are released. Call this method before the application finishes; otherwise the worker threads may keep the JVM running!

A detailed explanation of how ExecutorService works and examples will be presented in the next post.
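As a minimal sketch of these two methods, the example below submits a few tasks to a fixed pool created with Executors.newFixedThreadPool and then shuts the pool down. The class name ExecutorDemo, the pool size of 2 and the AtomicInteger used to count finished tasks are assumptions made for this illustration.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicInteger;

class ExecutorDemo {

    // Submits taskCount tasks to a pool of two threads and returns how many of them ran.
    static int runTasks(int taskCount) {
        ExecutorService executor = Executors.newFixedThreadPool(2);
        AtomicInteger completed = new AtomicInteger(); // thread-safe counter, only used to observe the result
        Runnable task = () -> completed.incrementAndGet();

        for (int i = 0; i < taskCount; i++) {
            executor.submit(task); // queued; runs when a pool thread is free
        }

        executor.shutdown(); // no new tasks accepted, submitted ones still run
        try {
            executor.awaitTermination(10, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return completed.get();
    }

    public static void main(String[] args) {
        System.out.println("Completed tasks: " + runTasks(5));
    }
}
```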

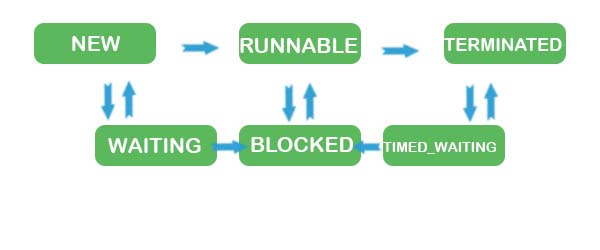

The Java thread’s life cycle

Creating a thread is just the beginning. Each thread has its life cycle. Threads can be in one of six states. Permissible states of the thread are in the Thread.State enumeration class:

- NEW - a new thread that has not yet been launched,

- RUNNABLE - a thread that can execute its code,

- TERMINATED - a thread that has ended,

- BLOCKED - a thread that is blocked, waiting to acquire a monitor lock held by another thread,

- WAITING - a thread that waits indefinitely for another thread, for example after calling wait() on an object. Such a thread stays in this state until another thread calls notify() or notifyAll() on the same object,

- TIMED_WAITING - a thread that waits for a specified amount of time, for example after calling Thread.sleep() or wait() with a timeout.

The change from the NEW state to the RUNNABLE state is made by calling the start() method on the thread instance. Only then can the thread be executed. Each thread can be run exactly once - the start() method can be called on it only once. A second call throws an IllegalThreadStateException.
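The state transitions described above can be observed with Thread.getState(). The following is a minimal sketch (the class name ThreadStateDemo is hypothetical) that checks the state before start(), after the thread has finished, and what happens on a second start().

```java
class ThreadStateDemo {

    static String observe() {
        Thread thread = new Thread(() -> { /* nothing to do */ });
        Thread.State before = thread.getState(); // NEW: not started yet

        thread.start();
        try {
            thread.join(); // wait until the thread has finished
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        Thread.State after = thread.getState(); // TERMINATED

        boolean secondStartRejected = false;
        try {
            thread.start(); // a thread cannot be started twice
        } catch (IllegalThreadStateException e) {
            secondStartRejected = true;
        }
        return before + " -> " + after + ", second start rejected: " + secondStartRejected;
    }

    public static void main(String[] args) {
        // prints: NEW -> TERMINATED, second start rejected: true
        System.out.println(observe());
    }
}
```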

Synchronization in Java

Race Condition

You already know that threads share the address space. I described it in the “Concurrent data processing” subsection. This has very important consequences. See the example below:

```java
class Counter {

    private int value;

    public void increment() {
        value++;
    }

    public int getValue() {
        return value;
    }
}

public class RaceCondition {

    public static void main(String[] args) throws InterruptedException {
        Counter counter = new Counter();

        // Every thread runs the same task on the same Counter instance
        Runnable r = () -> {
            for (int i = 0; i < 50000; i++) {
                counter.increment();
            }
        };

        Thread t1 = new Thread(r);
        Thread t2 = new Thread(r);
        Thread t3 = new Thread(r);

        t1.start();
        t2.start();
        t3.start();

        // Wait for all three threads before reading the result
        t1.join();
        t2.join();
        t3.join();

        System.out.println(counter.getValue());
    }
}
```

As you can see, I used the Thread.join() method. This method makes the current thread wait until the thread on which join() was called has finished. Here the main thread waits for t1 to end, then for t2, and then for t3 (the threads themselves may finish in a different order).

There are three threads in the above code, and each of them increments the value of the variable by 1 exactly 50 000 times, so the final counter value should be 150 000, right? Try to run this code several times. What results do you get? In my case, the results were:

- 111772

- 102556

- 132565

- 92146

What you’ve seen above is a race condition. It happens when several threads modify, at the same time, a variable that is not prepared for such concurrent changes. But why did the value attribute have such different values? This is because the value++ operation (value = value + 1) is not an atomic operation.

An atomic operation is an operation that is indivisible: other threads see it either as not started or as fully completed, never half-done.

The execution of value++ (value = value + 1) operation consists of several steps:

- Get the current value to a temporary variable (not visible in the source code),

- Add 1 to the temporary variable,

- Assign the increased value to the value variable.

In the previous subsection, I described time slicing. It plays a key role here. Imagine a situation in which thread T1 executes steps 1, 2 and 3, and then a context switch happens. Next, threads T2 and T3 both take step 1. Then thread T2 takes steps 2 and 3. After a while, thread T3 does the same. As a result, threads overwrite the variable using outdated values. One such scenario is shown in the table below:

| Operation | Thread | Step | Value variable | Temp variable value |
|---|---|---|---|---|
| 1. | T1 | 1. | 0 | 0 |
| 2. | T1 | 2. | 0 | 1 |
| 3. | T1 | 3. | 1 | 1 |
| 4. | T2 | 1. | 1 | 1 |
| 5. | T3 | 1. | 1 | 1 |
| 6. | T2 | 2. | 1 | 2 |
| 7. | T2 | 3. | 2 | 2 |
| 8. | T3 | 2. | 2 | 2 |
| 9. | T3 | 3. | 2 | 2 |

In the example above, operation 9 sets the value to 2 in the T3 thread, ignoring the increase made by the T2 thread in operation 7. To avoid a race condition, it is necessary to synchronize the threads.
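The interleaving from the table can be replayed deterministically in a single thread by writing the three steps of value++ out by hand. This is only a sketch: the class name and the temporary variables t1Temp, t2Temp and t3Temp are hypothetical stand-ins for the hidden temporaries of threads T1, T2 and T3.

```java
class LostUpdateReplay {

    // Replays operations 1-9 from the table and returns the final value.
    static int replay() {
        int value = 0;

        int t1Temp = value;   // op 1: T1 reads 0
        t1Temp = t1Temp + 1;  // op 2: T1 computes 1
        value = t1Temp;       // op 3: T1 writes 1

        int t2Temp = value;   // op 4: T2 reads 1
        int t3Temp = value;   // op 5: T3 reads 1 as well

        t2Temp = t2Temp + 1;  // op 6: T2 computes 2
        value = t2Temp;       // op 7: T2 writes 2

        t3Temp = t3Temp + 1;  // op 8: T3 computes 2 from its outdated read
        value = t3Temp;       // op 9: T3 writes 2, T2's increment is lost

        return value;
    }

    public static void main(String[] args) {
        // Three increments were performed, but this prints 2
        System.out.println(replay());
    }
}
```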

Java Threads Synchronization

In general, thread states are intuitive. The descriptions in the previous section help you understand what happens to a thread in a given state. Well, maybe apart from the BLOCKED state. When is the thread BLOCKED?

A thread in the BLOCKED state is waiting for a locked resource. In Java, locking is done with monitors, which are used to synchronize threads. Each object in Java is associated with a monitor, which a thread can lock or unlock. A monitor can be locked by only one thread at a time. Thanks to this, objects can be used to synchronize threads. For this purpose, the synchronized keyword is used.

Synchronized block

With a synchronized block, you can be sure that everything inside the block is executed by at most one thread at a time (among the threads that synchronize on the same object). Try to run the modified example from the previous subsection several times:

```java
class Counter {

    private int value;

    public void increment() {
        // Only one thread at a time can hold the monitor of this Counter
        synchronized (this) {
            value++;
        }
    }

    public int getValue() {
        return value;
    }
}
```

Each time the application returns the correct result - 150 000.

Synchronized method

You can also use the synchronized keyword for the method:

```java
public synchronized void increment() {
    value++;
}
```

In practice, both versions of the increment method are equivalent. Marking the method with the synchronized keyword is equivalent to placing the whole body of the method in a synchronized block. Which object is used as the monitor depends on the type of method:

- instance method - the instance of the class (this) is used as the monitor,

- static method - the Class object of the class is used as the monitor.
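The two monitor choices can be sketched side by side. In the example below (the class MonitorDemo and its method names are assumptions made for this illustration), each synchronized method is paired with an explicit block on the same monitor, and two threads use the two forms concurrently without losing updates.

```java
class MonitorDemo {

    private static int staticCount;
    private int instanceCount;

    synchronized void incrementInstance() {           // monitor: this
        instanceCount++;
    }

    void incrementInstanceWithBlock() {
        synchronized (this) {                         // the same monitor as above
            instanceCount++;
        }
    }

    static synchronized void incrementStatic() {      // monitor: MonitorDemo.class
        staticCount++;
    }

    static void incrementStaticWithBlock() {
        synchronized (MonitorDemo.class) {            // the same monitor as above
            staticCount++;
        }
    }

    // Two threads increment both counters; returns {instanceCount, staticCount}.
    static int[] run(int perThread) {
        MonitorDemo demo = new MonitorDemo();
        staticCount = 0;
        Thread a = new Thread(() -> {
            for (int i = 0; i < perThread; i++) {
                demo.incrementInstance();
                incrementStatic();
            }
        });
        Thread b = new Thread(() -> {
            for (int i = 0; i < perThread; i++) {
                demo.incrementInstanceWithBlock();
                incrementStaticWithBlock();
            }
        });
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return new int[] { demo.instanceCount, staticCount };
    }

    public static void main(String[] args) {
        int[] counts = run(50_000);
        System.out.println(counts[0] + " " + counts[1]); // prints: 100000 100000
    }
}
```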

Remember not to abuse synchronized. The code in a synchronized block can be executed by only one thread at a time (it loses the possibility of concurrent execution), which can make the program slower. Use synchronized only in places where it is necessary.

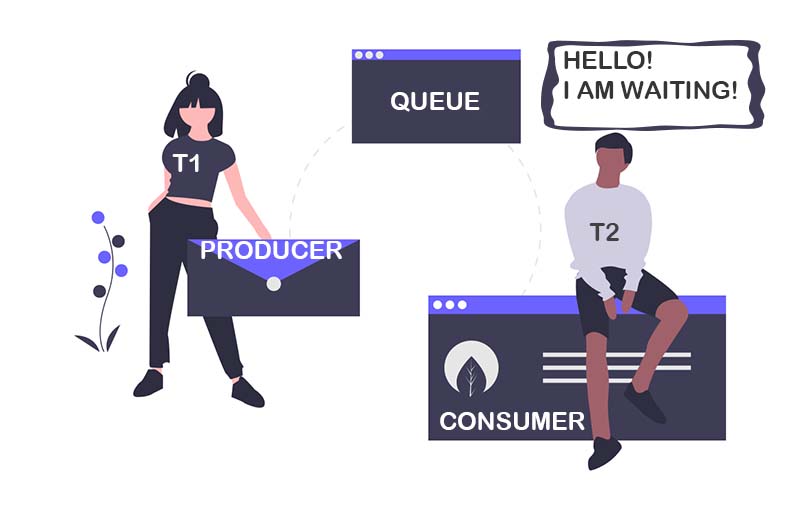

Java Thread in WAITING state

One of the ways to put a thread in the WAITING state is to call the Object.wait() method. What does the wait() method do? Imagine a situation where you have two threads. One produces some data, the other consumes it (the Producer-Consumer pattern).
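A minimal sketch of such a program might look as follows. The class name BusyWaitingDemo and the use of a ConcurrentLinkedQueue as the shared queue are assumptions made for this illustration; T1 is the producer and T2 the consumer.

```java
import java.util.Queue;
import java.util.concurrent.ConcurrentLinkedQueue;

class BusyWaitingDemo {

    // Produces `expected` items in T1 and consumes them in T2; returns how many were taken.
    static int produceAndConsume(int expected) {
        Queue<Integer> queue = new ConcurrentLinkedQueue<>();
        int[] taken = {0}; // written only by the consumer, read after join()

        Thread producer = new Thread(() -> {      // T1
            for (int i = 0; i < expected; i++) {
                queue.add(i);
            }
        });

        Thread consumer = new Thread(() -> {      // T2
            while (taken[0] < expected) {
                if (queue.poll() != null) {
                    taken[0]++;
                }
                // queue empty: the loop simply tries again, burning CPU time
            }
        });

        consumer.start();
        producer.start();
        try {
            producer.join();
            consumer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return taken[0];
    }

    public static void main(String[] args) {
        System.out.println("Items consumed: " + produceAndConsume(1_000));
    }
}
```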

The data-consuming thread (T2) uses a while loop, which is executed until the expected number of items is taken from the queue. The program works. However, it has a subtle problem. The consumer thread works all the time. It takes up CPU time continuously! What’s more, for most of that time it just spins inside the loop, checking whether there are any new messages in the queue. How can this problem be solved? One way may be to put the consumer thread to sleep using the Thread.sleep() method. This would also be wasteful - how do you know how long it will take to produce the next message? For this purpose, it is better to use the notification mechanism.

Thread Notification

Every Java object, in addition to its monitor, has a special wait set. The elements of this set are threads that wait for a notification on this object. The only way to modify the content of the wait set is to use methods available in the Object class:

- Object.wait() - adds the current thread to the wait set,

- Object.notify() - notifies and wakes up one of the waiting threads,

- Object.notifyAll() - notifies and wakes up all waiting threads.

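The producer from the previous section should use the notify or notifyAll method to inform consumers of the new message. Consumers should use the wait method so that they can wait for notifications from the producer. All three methods must be called by a thread that holds the object’s monitor (that is, inside synchronized); otherwise an IllegalMonitorStateException is thrown.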
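One possible shape of such a producer and consumer is sketched below. MessageQueue and WaitNotifyDemo are hypothetical classes written for this illustration. Note that wait() is called in a loop that re-checks the condition, because a thread may wake up without the condition being true.

```java
import java.util.ArrayDeque;
import java.util.Queue;

class MessageQueue {

    private final Queue<String> messages = new ArrayDeque<>();

    public synchronized void put(String message) {
        messages.add(message);
        notifyAll(); // wake up consumers waiting on this object
    }

    public synchronized String take() throws InterruptedException {
        while (messages.isEmpty()) {
            wait(); // releases the monitor and waits for a notification
        }
        return messages.remove();
    }
}

class WaitNotifyDemo {

    // Returns how many messages the consumer received.
    static int produceAndConsume(int expected) {
        MessageQueue queue = new MessageQueue();
        int[] taken = {0}; // written only by the consumer, read after join()

        Thread consumer = new Thread(() -> {
            try {
                while (taken[0] < expected) {
                    queue.take(); // sleeps in WAITING state while the queue is empty
                    taken[0]++;
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        Thread producer = new Thread(() -> {
            for (int i = 0; i < expected; i++) {
                queue.put("message " + i);
            }
        });

        consumer.start();
        producer.start();
        try {
            producer.join();
            consumer.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return taken[0];
    }

    public static void main(String[] args) {
        System.out.println("Messages consumed: " + produceAndConsume(1_000));
    }
}
```

Unlike a busy-waiting loop, the consumer here uses no CPU time while the queue is empty.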

Interruption of the thread

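A thread is interrupted when the Thread.interrupt() method is called on its instance. This sets a special flag on the thread (the interrupt status), which can be checked with isInterrupted() or with the static Thread.interrupted() method (the latter also clears the flag). If the thread is blocked in a method such as sleep(), wait() or join(), the interruption is signalled by an InterruptedException.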
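A minimal sketch of interrupting a sleeping thread (the class name InterruptDemo and the 60-second sleep are arbitrary choices made for this illustration):

```java
class InterruptDemo {

    // Returns true if the sleeping thread was woken up by an InterruptedException.
    static boolean interruptSleepingThread() {
        boolean[] sawException = {false}; // written by the sleeper, read after join()

        Thread sleeper = new Thread(() -> {
            try {
                Thread.sleep(60_000); // would sleep for a minute if not interrupted
            } catch (InterruptedException e) {
                sawException[0] = true; // the sleep ended early
            }
        });

        sleeper.start();
        sleeper.interrupt(); // sets the interrupt flag; sleep() reacts by throwing
        try {
            sleeper.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return sawException[0];
    }

    public static void main(String[] args) {
        System.out.println("Interrupted while sleeping: " + interruptSleepingThread());
    }
}
```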

Java Volatile keyword

Java provides another mechanism connected to synchronization - volatile. The Java Language Specification says that a write to a volatile field happens-before every subsequent read of that field. In other words, the volatile modifier ensures that every thread reading a given attribute will see the most recently written value of that attribute. However, you have to watch out for modifications that are not atomic (such as value++) - unfortunately, volatile will not protect you there. In this case, you will need the synchronization described earlier.

Volatile --> Guarantees visibility and NOT atomicity

Synchronization (Locking) --> Guarantees visibility and atomicity (if done properly)

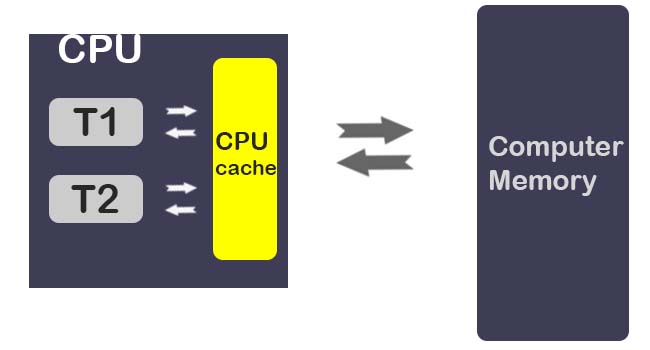

You’re probably wondering what this mechanism is for and what the risk of not using volatile is. Imagine a multi-threaded application on a single-core processor:

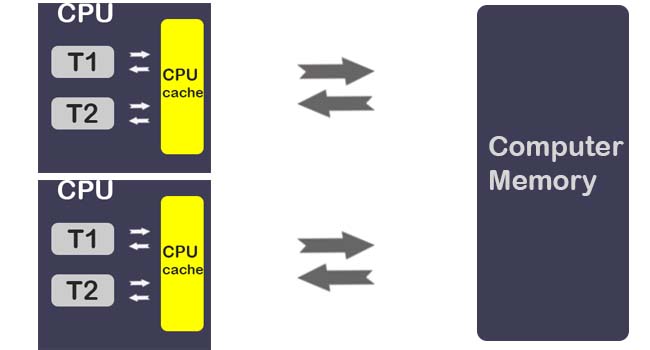

The variables are copied from the main memory to the CPU cache (because access to the CPU cache is much faster than access to RAM). The threads then access the data in the CPU cache rather than in main memory, which saves time and increases performance. There’s nothing dangerous here. Let’s move on to a 2-core processor:

On a multi-CPU computer each thread may run on a different CPU, which means that each thread may copy the variables into the cache of a different CPU. Imagine a situation in which two or more threads have access to a shared object that contains the value variable from the previous point:

```java
class Counter {

    public int value;
}
```

Now, T1 and T2 can run on different CPU cores. When T1 changes the value variable, the change is made in the CPU cache, and there is no guarantee that every change in the CPU cache is written back to the main memory immediately. So when T2 tries to read the value variable from the main memory, it may or may not find the updated value.

To protect against this problem, use the volatile keyword.

volatile is a keyword applied to a variable to make sure its value is always read from and written to main memory rather than only the CPU cache.

This ensures that you get the “really” correct value of the variable.
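A typical use of volatile is a stop flag that one thread sets and another thread reads. The sketch below is a hypothetical illustration (the class name, the field name running and the timings are assumptions). Without volatile on the flag, the worker would not be guaranteed to ever notice the change.

```java
class VolatileFlagDemo {

    // volatile: a write by one thread is visible to later reads in other threads
    private volatile boolean running = true;

    // Starts a worker that spins on the flag, clears the flag, and reports whether the worker stopped.
    static boolean workerStops() {
        VolatileFlagDemo demo = new VolatileFlagDemo();

        Thread worker = new Thread(() -> {
            while (demo.running) {
                // keep spinning until another thread clears the flag
            }
        });
        worker.start();

        try {
            Thread.sleep(100);     // let the worker spin for a moment
            demo.running = false;  // written by the calling thread, read by the worker
            worker.join(5_000);    // wait at most five seconds for the worker to stop
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return !worker.isAlive();
    }

    public static void main(String[] args) {
        System.out.println("Worker stopped: " + workerStops());
    }
}
```

A single write of a boolean is atomic, so volatile is enough here; a compound update such as value++ would still need synchronization.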

Summary

As you can see, there are many thread synchronization mechanisms available in Java. Please take a look at them to better decide which ones to use when. If you want to know more about concurrency, there will be a post about ExecutorService soon!